Oh Mandy!

I wanted to use Rust to do some computational heavy work, so let’s compute

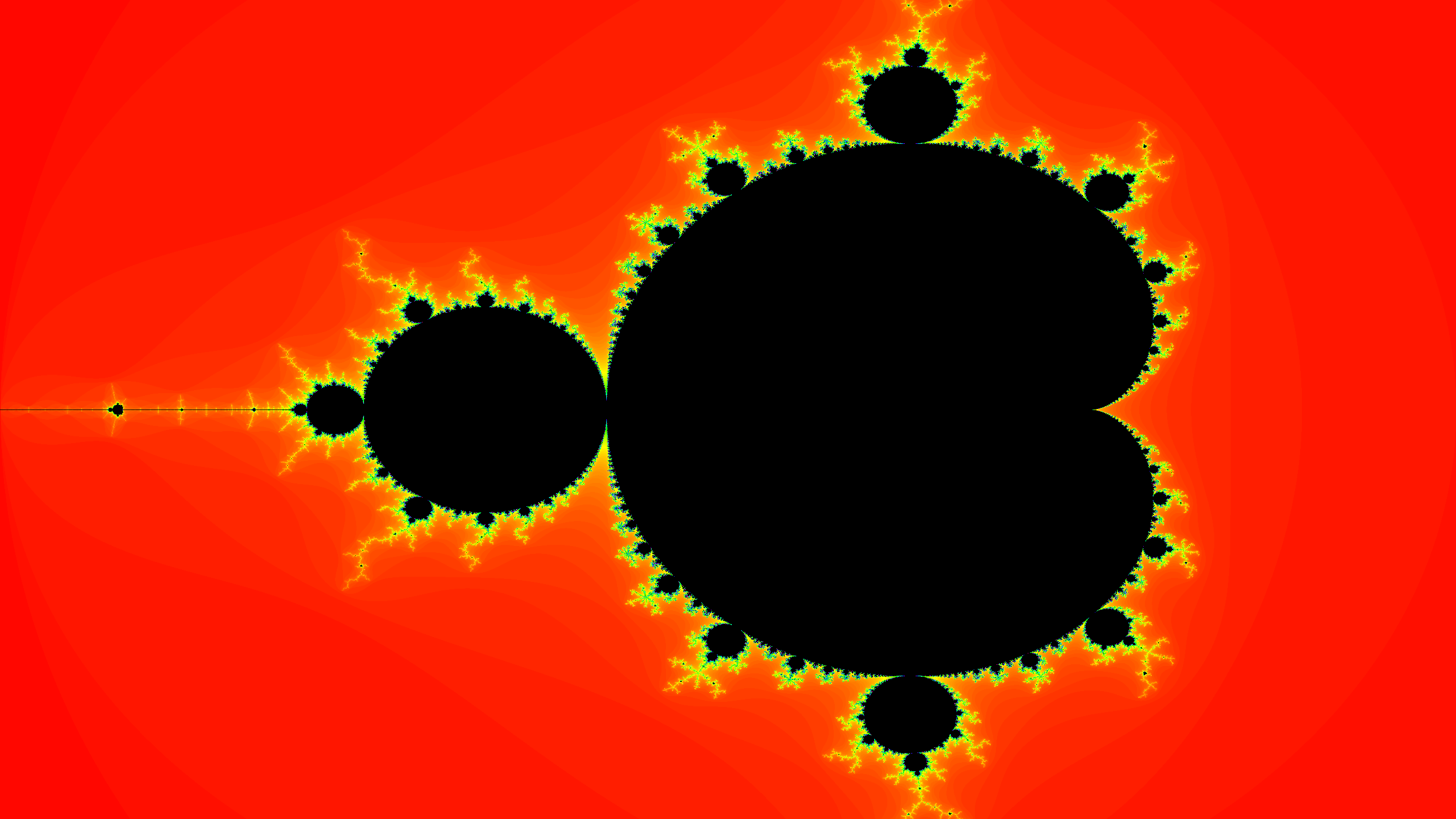

a mandelbrot. The mandelbrot set is a mathematical formula that forms a

fractal: when plotted it forms a shape that repeats itself, smaller and

smaller. The shape itself somewhat resembles a ladybug that mysteriously

emits lightning strikes. The mandelbrot exists in a plane of complex numbers.

Complex numbers are weird, as they have a real and an imaginary part.

It always helps me think of them as a ‘shadow’ of a vector in real space.

I wanted to use Rust to do some computational heavy work, so let’s compute

a mandelbrot. The mandelbrot set is a mathematical formula that forms a

fractal: when plotted it forms a shape that repeats itself, smaller and

smaller. The shape itself somewhat resembles a ladybug that mysteriously

emits lightning strikes. The mandelbrot exists in a plane of complex numbers.

Complex numbers are weird, as they have a real and an imaginary part.

It always helps me think of them as a ‘shadow’ of a vector in real space.

The mandelbrot set is defined by the formula:

zn+1 = zn2 + c

where z and c are complex numbers, and n represents an iteration count. Remember from math class that complex numbers can be written as a + bi. In Rust, we write them as

num::Complex::<f32>(re, im)

For plotting the mandelbrot we will map the (x, y) coordinates of the screen

to the complex number c as Complex(re: x, im: y). The mandelbrot lives at

-2.0 <= x <= 1.0 and -1.0 <= y <= 1.0, so we map the screen width and

height to this range. Then for every screen pixel we compute the mandelbrot

value.

The number z is computed with iterations; we will start at z(0, 0) and

iterate until z falls within or outside the set.

The value falls outside the set when |z| >= 2.0. When it falls inside

the set, the values will start to repeat and we can iterate until our socks

fall off. In practice we are not going to check for repeating values; instead

we’ll use a cutoff of MAX_ITERATIONS.

use num::Complex;

// compute mandelbrot value at c(mapped_x, mapped_y)

// Returns the number of iterations it took

fn mandelbrot(c: Complex<f32>) -> u32 {

const MAX_ITERATIONS: u32 = 200;

let mut n: u32 = 0;

let mut z: Complex<f32> = Complex::new(0.0, 0.0);

while z.norm() <= 2.0 && n < MAX_ITERATIONS {

z = z.powu(2) + c;

n += 1;

}

n

}

The shape of the mandelbrot already starts to appear after 20 max iterations;

it starts looking nice at 50, and the image below was produced with

MAX_ITERATIONS = 200.

When you plot only the condition of whether a point is part of the set, then it would produce a black-and-white image. Instead, map the number of iterations done to HSV colorspace in order to produce the image below.

Parallelization

I remember from my youth that computing a mandelbrot was a computionally heavy task. For modern computers however, it’s not a big challenge. Most CPUs nowadays can do a multiply-add in a single instruction, note however that we are dealing with complex numbers (which are a multiply-add in themselves).

The Rust code takes considerable run-time in debug mode, but compile and

run in release mode to experience a 10x speedup.

In any case, it’s easy to split up this task over multiple cores, and so

we can write a parallel version that uses the hardware more effectively.

Multi-threading in Rust is relatively easy, but (like often in Rust) it can be a bit cumbersome to come to the final solution.

let num_threads = std::thread::available_parallelism()

.unwrap().get();

dbg!(num_threads);

The function available_parallelism() basically returns the number of

CPU cores. It returns an impractical type Result<NonZeroUsize> when

all you really want is something like u32. Ah well, at least we can

unwrap and convert it, with the added risk of unwrap() panicking

in our face.

For the parallel workload you intuitively want to split the dataset over

multiple CPU cores, and let each thread fill in part of the data array:

let mut data = vec![0u32; (width * height) as usize];

let chunksize = height as usize / num_threads;

let mut tasks = Vec::new();

for tid in 0..num_threads {

let task = thread::spawn(move || {

let start = (tid * chunksize) as u32 * width;

let end;

if tid >= num_thread - 1 {

end = width * height;

} else {

end = start + chunksize as u32 * width;

}

// each thread computes a slice of data

compute_chunk(&mut data[start..end]);

}

tasks.push(task);

}

But alas, this code does not work!

The Rust borrow checker does not allow sharing data mutably across threads.

So therefore we give out neat slices of data to compute and make sure that

each thread receives its own slice, and does not step on another thread’s

toes. But again, the Rust borrow checker does not allow sharing a piece of

data mutably across threads.

The key to solving this problem is realizing that threaded code in Rust should be written in a purely functional style; rather than manipulating existing data, the function should produce new data:

fn compute_chunk(tid: usize,

width: u32, height: u32,

start_y: u32, end_y: u32) -> Vec<u32> {

assert!(end_y > start_y);

let size = (width * (end_y - start_y)) as usize;

let mut data = vec![0u32; size];

... do the computation ...

data

}

Now that each thread manages its own data, we have to join threads and

collect all the data, combining it all together into one large Vec

to produce the final image. Having to do the extra step has a performance

cost, but now the Rust compiler affirms that the code is safe.

The parallel version is indeed faster than the serial code:

- 4-core intel MacBook Pro: 3.2x speedup

- 6-core/12 threads i7: 5.9x speedup

- 8 threads MacBook M1: 4.9x speedup